Google has released Gemma 4, an open large language model that brings significant improvements to advanced reasoning and agentic workflows. Built on the same technological foundations as the Gemini 3 Pro models, Gemma 4 is natively trained on over 140 languages and available in four model sizes: Effective 2B (E2B), Effective 4B (E4B), 26B Mixture of Experts (MoE), and 31B Dense.

The 26B and 31B models target frontier-level performance and can run offline on personal machines, while the smaller E2B and E4B models are better suited to smartphones, other mobile devices, and Internet of Things (IoT) hardware.
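
To give a rough sense of why the sizes map to different classes of hardware, the sketch below estimates weights-only memory from parameter count and numeric precision (parameters × bits ÷ 8). The parameter counts are simply read off the model names, which is an assumption: the "Effective" sizes may not equal the number of parameters actually stored, and the bit widths shown are illustrative rather than formats Google has confirmed for Gemma 4. Activations, context cache, and runtime overhead are ignored, so real requirements will be higher.

```python
# Nominal parameter counts read off the model names.
# ASSUMPTION: "Effective" sizes are treated as plain parameter counts,
# which may understate what is actually stored on disk or in memory.
NOMINAL_PARAMS = {
    "E2B": 2e9,
    "E4B": 4e9,
    "26B MoE": 26e9,   # a MoE model still has to hold all experts in memory
    "31B Dense": 31e9,
}


def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Return the weights-only footprint in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def footprint_table(bit_widths=(16, 8, 4)):
    """Map each model name to {bit width: estimated gigabytes}."""
    return {
        name: {bits: weight_memory_gb(n, bits) for bits in bit_widths}
        for name, n in NOMINAL_PARAMS.items()
    }


if __name__ == "__main__":
    # Print one line per model with the estimate at each illustrative precision.
    for name, row in footprint_table().items():
        cells = ", ".join(f"{bits}-bit: {gb:.1f} GB" for bits, gb in row.items())
        print(f"{name:<10} {cells}")
```

Under these assumptions, the two smaller models come out at a few gigabytes or less, while the two larger ones need tens of gigabytes at 16-bit precision, which is consistent with the phone-versus-workstation split described above.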

Gemma 4 is an underlying AI model rather than a finished chatbot product, so developers can modify, fine-tune, and customise it for specific workflows and computing needs. The models can be run locally, which keeps data on the device and can reduce costs.

Google worked closely with partners including Qualcomm, MediaTek, and Nvidia on the release. The new models are available under the Apache 2.0 licence, which gives developers, researchers, and commercial entities significant freedom to use, modify, and redistribute them.

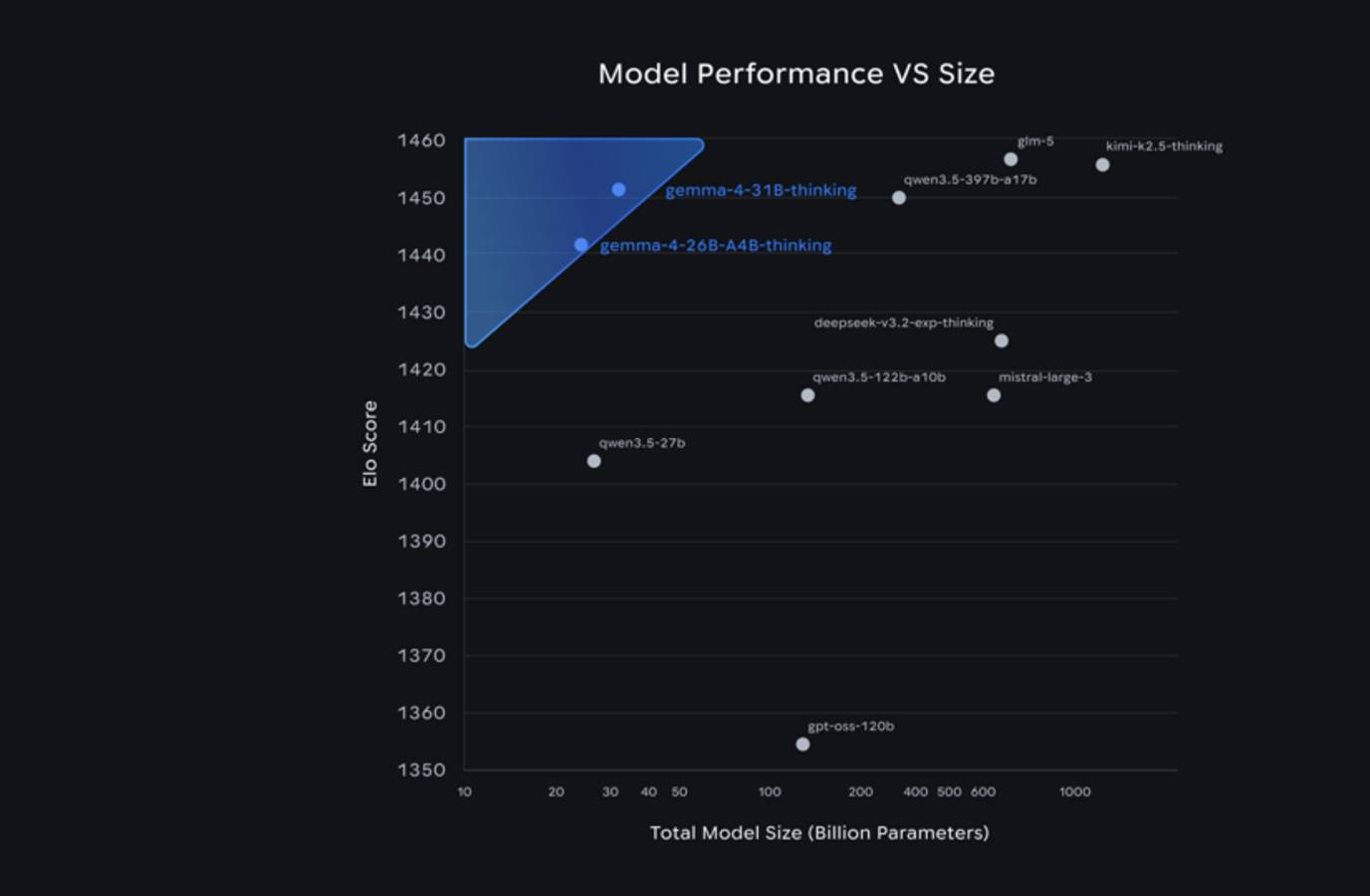

Arena AI ranks Gemma 4's 31-billion-parameter model third, behind GLM-5 and Kimi K2.5 Thinking, and the 26-billion-parameter model sixth. Gemma 4 outcompetes models 20 times its size and is expected to handle a wide variety of generative AI tasks, with text, audio, and image input, support for more than 140 languages, and a long context window.